How the English language changes! Most of us think of crawlers as very slow creatures, like crawling babies or night crawlers that are slow by nature. However, when it comes to the internet, crawlers are anything but slow, and they want websites to be extremely fast.

Web bots created to crawl the internet and index websites for search have changed our definition of crawling. They are also the reason your website needs to respond quickly in order to get high rankings.

Let’s talk about website speed and page loading speed. For the sake of this article, let’s treat website speed and page speed as one and the same. I say this because when searching the internet, humans can only look at one page at a time.

The speed at which a page loads determines whether web bots and humans stay interested in its content. For humans, if a page takes more than 2 seconds to load, they move on to the next search result. Oddly enough, mobile phone users are said to be a little more forgiving and will allow maybe 6 to 10 seconds.

If internet users are doing keyword searches to find whatever it is they are looking for, and you have great keyword optimization going on, you would think that you would rank high! However, if your site takes too long to load, they are gone and you have lost sales!

Of course, it gets even trickier when web crawlers and spiders are taken into consideration. An almost counter-intuitive effect takes place when crawlers request your pages too fast: you might get a warning to slow things down a little bit. Huh?!?

A crawler has to access the code on a page to analyze it for SEO. Every time a crawler requests a web page, it puts a load on the server that hosts your website, just as a visit from a real human does. If too many requests arrive in a very narrow window of time, the server can become overloaded and start responding slowly or refusing requests. So now we have a quandary: both humans and bots want your page to load quickly, and you want lots of requests for your pages, but if those requests arrive faster than the server can handle, your pages stop getting served.

Consequently, there is an optimum delay between crawler requests to your website. So now we have two issues going on: website load speed (along with page load time SEO), and the delay between page requests that your server can handle at a given crawl speed.

Website Speed for Optimum SEO

In life and on the internet, very few things are just set it and forget it. Most things in life require constant maintenance, review, and adjustment. Your website speed for optimum SEO is no different. It takes constant vigilance.

Most hosted servers will allow you to set a few things when it comes to crawlers. One of these is the crawler thread setting, defined as the number of threads that crawlers will run concurrently on your website. The website owner can set this value, and the default is typically between 5 and 10. So for example, if you set the crawler thread to 8, you are allowing only 8 simultaneous crawls of your website at the same time.

You will have to experiment with this to get it to work well as you try to address the quandary noted above. This takes some time to do manually. We will address that shortly.
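To make the idea of a thread limit concrete, here is a minimal sketch in Python. It is only a simulation: `MAX_CRAWLER_THREADS`, `fake_fetch`, and `crawl` are hypothetical names, no real pages are requested, and an actual crawler or host may enforce its limit quite differently.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

MAX_CRAWLER_THREADS = 8  # hypothetical setting, matching the example value of 8


class ConcurrencyTracker:
    """Records how many simulated fetches run at the same moment."""

    def __init__(self):
        self._lock = threading.Lock()
        self.active = 0
        self.peak = 0

    def __enter__(self):
        with self._lock:
            self.active += 1
            self.peak = max(self.peak, self.active)
        return self

    def __exit__(self, *exc):
        with self._lock:
            self.active -= 1
        return False


def fake_fetch(page, tracker):
    """Stand-in for a page request; sleeps instead of touching the network."""
    with tracker:
        time.sleep(0.01)
    return page


def crawl(pages, max_threads=MAX_CRAWLER_THREADS):
    """Fetch every page while never running more than max_threads at once."""
    tracker = ConcurrencyTracker()
    with ThreadPoolExecutor(max_workers=max_threads) as pool:
        fetched = list(pool.map(lambda p: fake_fetch(p, tracker), pages))
    return fetched, tracker.peak


pages = [f"/page-{i}" for i in range(40)]
fetched, peak = crawl(pages)
print(f"fetched {len(fetched)} pages, peak simultaneous fetches: {peak}")
```

However many pages are queued, the peak never exceeds the thread limit, which is the behavior the setting is meant to guarantee for your server.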

In addition, in your robots.txt file, you can give the bots a specific command that tells them how much delay you want between requests. In other words, you set the pace at which crawlers operate, and crawlers that support the command will adhere to it (spam crawlers generally will not).

In the robots.txt file it would look like this:

    User-agent: [name of crawler]
    Crawl-delay: 5

This tells the specific crawler to wait 5 seconds between requests to the server. Now understand, depending on your crawler thread setting, you can have many crawls going on at the same time. Here you are only instructing the crawler how long to wait between requests.

If you want to have one general command in your robots.txt file for all crawlers, it would look like this:
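As a rough worked example, the delay puts a ceiling on how many pages a crawler can request in a day. The sketch below assumes each crawl thread waits the full delay between its own requests, which is how the setting is described here; real crawlers may interpret the delay differently. The function name `max_requests_per_day` is made up for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def max_requests_per_day(crawl_delay_seconds, threads=1):
    """Upper bound on daily requests if every thread waits the full delay.

    Assumption: each thread applies the delay independently between its
    own requests. This is an illustration, not how any particular
    crawler is guaranteed to behave.
    """
    if crawl_delay_seconds <= 0 or threads < 1:
        raise ValueError("delay must be positive and threads at least 1")
    return int(SECONDS_PER_DAY // crawl_delay_seconds) * threads


print(max_requests_per_day(5))     # 17280
print(max_requests_per_day(5, 8))  # 138240
```

So a 5 second delay caps a single-threaded crawler at 17,280 requests a day, which matters if your site has more pages than that.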

    User-agent: *
    Crawl-delay: 5
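If you want to check how a parser reads these rules, Python's standard library includes `urllib.robotparser`. The sketch below parses an in-memory robots.txt rather than a live one; the file contents, the `bingbot` delay of 5, the general delay of 10, and the helper name `crawl_delay_for` are all illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt: one rule for a named crawler, one for everyone else.
ROBOTS_TXT = """\
User-agent: bingbot
Crawl-delay: 5

User-agent: *
Crawl-delay: 10
"""


def crawl_delay_for(robots_txt, user_agent):
    """Return the Crawl-delay (in seconds) that applies to user_agent, or None."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.crawl_delay(user_agent)


delay_bing = crawl_delay_for(ROBOTS_TXT, "bingbot")
delay_other = crawl_delay_for(ROBOTS_TXT, "SomeOtherBot")
print(delay_bing, delay_other)
```

Note that this parser treats a blank line as the end of a record, so the `User-agent` and `Crawl-delay` lines for one rule should sit on adjacent lines in the actual file.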

Of course, if you did that, you would be slowing down essential search engine crawlers such as Bingbot. (Googlebot ignores the Crawl-delay command altogether.) So it may be a good idea to specify which bots you want to give this command to.

All this talk about website load speed and page load time SEO could appear quite cumbersome! In fact, it is borderline rocket science for the novice and challenging even for the most experienced website hosts, owners, and webmasters.

You now at least have the background information needed to understand, at a high level, what website load speed is and how it operates. To truly optimize your site, you are going to have to analyze it and adjust it from time to time.

How to Help the Crawlers Help You Improve Your Website’s Load Speed!

Since this is the SEO environment that we all currently live and work in, it makes sense to use the way crawlers crawl to our advantage. This is paramount in finding and attracting consumers to your website.

The best way to do this is to have your site professionally analyzed on a regular basis, so you know exactly what should be done to turn crawler and human searches to your advantage. Our professional service for this is On page SEO checker.

You simply go there, put in your website's URL, and get a free on-page SEO report about your site. It is very comprehensive. For example, we used Fox News for this report and here is the link: Scan Back Links SEO report for Fox News. Just click the link to see it.

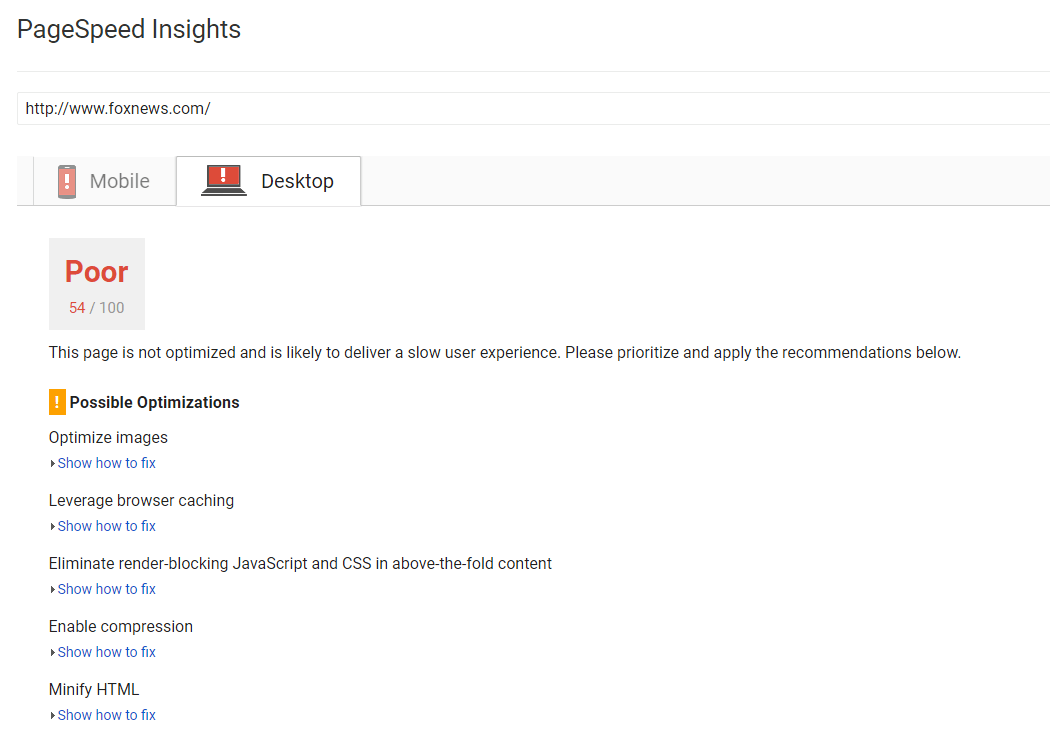

The report is broken down into categories. For our purposes, we have copied the section on site speed here:

Site Speed

| Test | Importance | Result |
| --- | --- | --- |
| HTML Compression/GZIP Test | 5 | Your web page has been compressed successfully by gzip compression application on your code. There has been a compression of your HTML from 69.23 kb to 14.22 kb (saving you 79%). This will ensure a speedier loading of web pages and faster user experience. |
| Page Cache Test | 5 | There is a caching mechanism on your website. Caching will speed up page-loading interval and reduce server loads. |
| JS Minification | 4 | Some JavaScript files on your website are not minified. |
| CSS Minification | 4 | The CSS files on your website are minified. |
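The gzip saving quoted in the report is simple arithmetic, and you can try gzip compression yourself with Python's standard `gzip` module. In this sketch the sample HTML is made up and `compression_savings` is a hypothetical helper; only the 69.23 kb and 14.22 kb figures come from the report.

```python
import gzip


def compression_savings(original_size, compressed_size):
    """Percentage of the original size saved by compression."""
    return 100.0 * (original_size - compressed_size) / original_size


# Figures from the report: 69.23 kb of HTML compressed to 14.22 kb.
report_savings = compression_savings(69.23, 14.22)
print(f"report savings: {report_savings:.0f}%")

# Made-up, repetitive sample page, only to show gzip at work.
html = ("<html><body>" + "<p>Hello, crawler!</p>" * 500 + "</body></html>").encode("utf-8")
compressed = gzip.compress(html)
sample_savings = compression_savings(len(html), len(compressed))
print(f"sample page: {len(html)} bytes -> {len(compressed)} bytes")
```

Real pages are less repetitive than this sample, so they usually compress less dramatically, but the calculation is the same.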

The Site Speed section is just one part of the report, but it makes clear the variables that affect your website’s page load speed. ScanBacklinks is in the initial stage of development and is focusing on becoming a leader in helping website owners and webmasters rank high in search engines. ScanBacklinks focuses on backlinks to other pages and other websites, and on how on-page optimisation affects your SEO rankings.

You can sign up for free at this time. Once signed in, put in your URL to have it analyzed and you will get a detailed report. This report is generated within a minute.

After you put in your URL and get the report, the next step is to implement the suggestions on your site and then watch your rankings and website load speed increase and sales go up. As with anything in life, knowledge is useless unless it is put into practice. Getting the report will not change anything unless you act on it.

You can also try using Google Page Speed Developers and have your website analyzed here. Take the time to read the frequently asked questions under their support tab so you understand how they analyze a site and can see if it is a good fit for your business and your website.

Have a question? Write it in the comments below!

We encourage feedback, questions, and comments from our readers. If this article has been helpful or you have more questions, please feel free to let us know. Your input is greatly appreciated and helps us help all of you in the future.